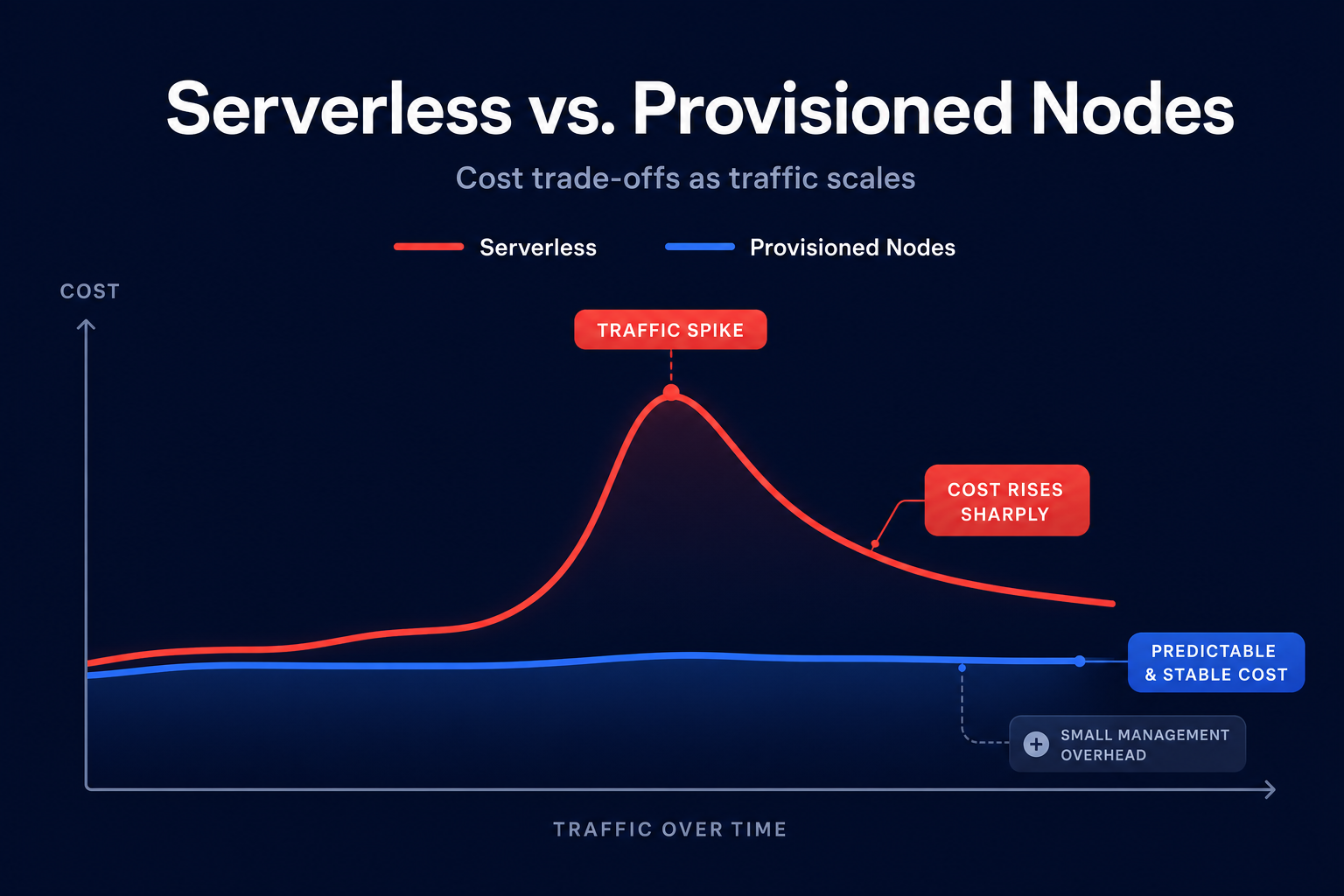

Are you paying for idle memory in your caching layer just to handle a hypothetical traffic spike? Choosing between Amazon ElastiCache Serverless and provisioned nodes is a financial decision that can swing your AWS bill by thousands of dollars.

Understanding the core pricing dimensions

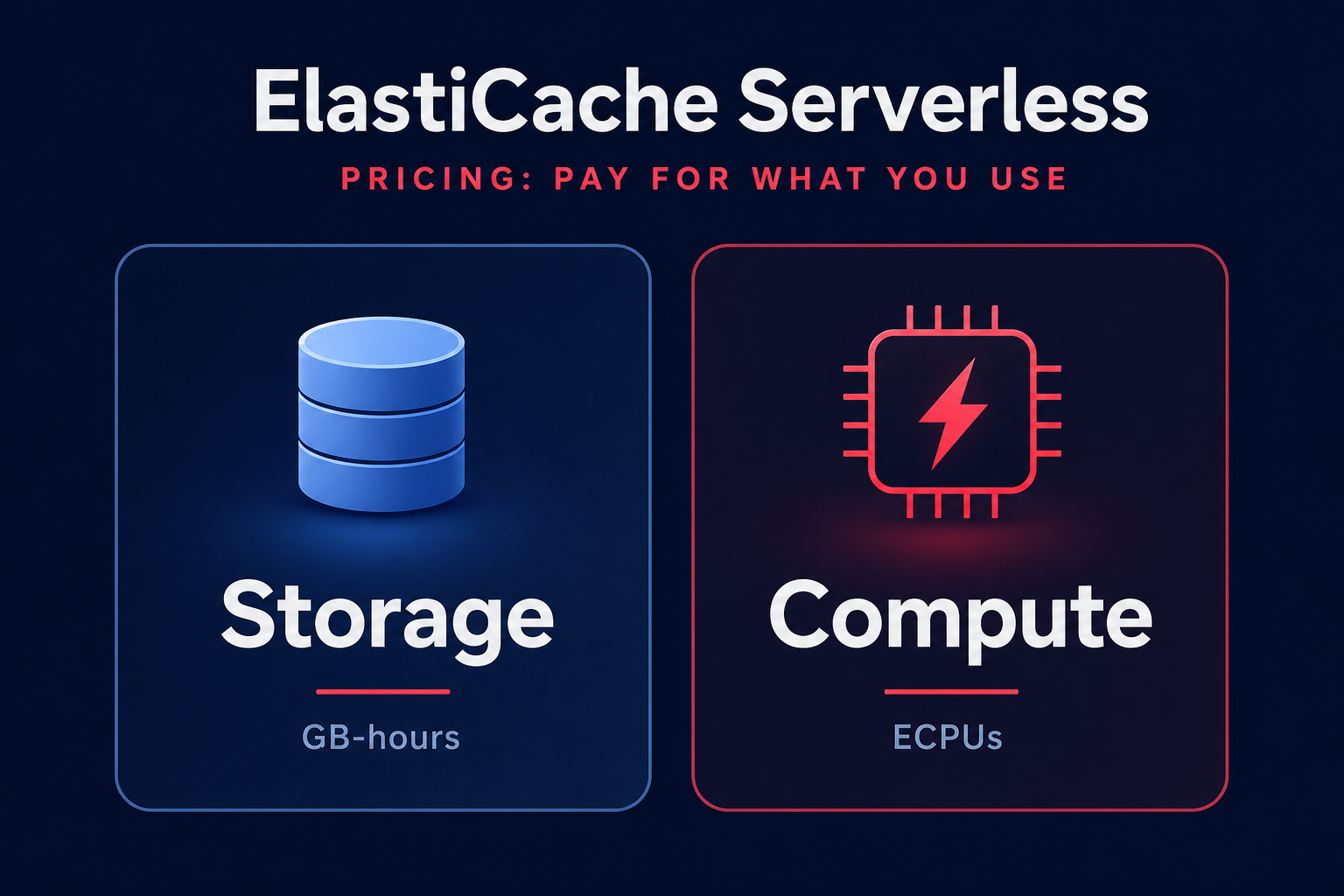

The introduction of Amazon ElastiCache Serverless fundamentally changed how you pay for managed Valkey and Redis OSS. In the serverless model, AWS abstracts the underlying infrastructure and charges you on two primary dimensions: data storage measured in GB-hours and compute usage measured in ElastiCache Processing Units (ECPUs). This removes the need to guess which instance size you need, but it introduces variable costs that scale with every request your application makes, since larger payloads and more complex commands consume more ECPUs.
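To see how the two dimensions combine, here is a minimal estimator. It is a sketch under stated assumptions, not an official calculator. The 730-hour month is a common approximation. `ecpus_per_request=1.0` assumes small (roughly 1 KB) GET/SET calls. The $0.084/GB-hour storage rate in the example is an assumed list price, so check the current ElastiCache pricing page. The ECPU rate is the Valkey figure quoted later in this article.

```python
# Minimal sketch of the two serverless pricing dimensions: storage and ECPUs.
HOURS_PER_MONTH = 730  # common approximation for a month of billing hours


def serverless_monthly_cost(avg_storage_gb: float,
                            requests_per_month: float,
                            usd_per_gb_hour: float,
                            usd_per_million_ecpu: float,
                            ecpus_per_request: float = 1.0) -> float:
    """Estimate one month of ElastiCache Serverless charges.

    ecpus_per_request defaults to 1.0, an assumption that fits small
    (roughly 1 KB) GET/SET calls; bigger payloads or heavier commands
    consume more ECPUs per request.
    """
    storage_cost = avg_storage_gb * HOURS_PER_MONTH * usd_per_gb_hour
    total_ecpus = requests_per_month * ecpus_per_request
    compute_cost = total_ecpus / 1_000_000 * usd_per_million_ecpu
    return storage_cost + compute_cost


if __name__ == "__main__":
    # 2 GB average storage, 10M requests/day for 30 days, the Valkey ECPU rate
    # quoted in this article, and an ASSUMED $0.084/GB-hour storage rate.
    estimate = serverless_monthly_cost(2, 10_000_000 * 30, 0.084, 0.0023)
    print(f"Estimated monthly cost: ${estimate:,.2f}")
```

At this volume, storage dominates the bill. Compute only overtakes it once request counts climb into the billions per day.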

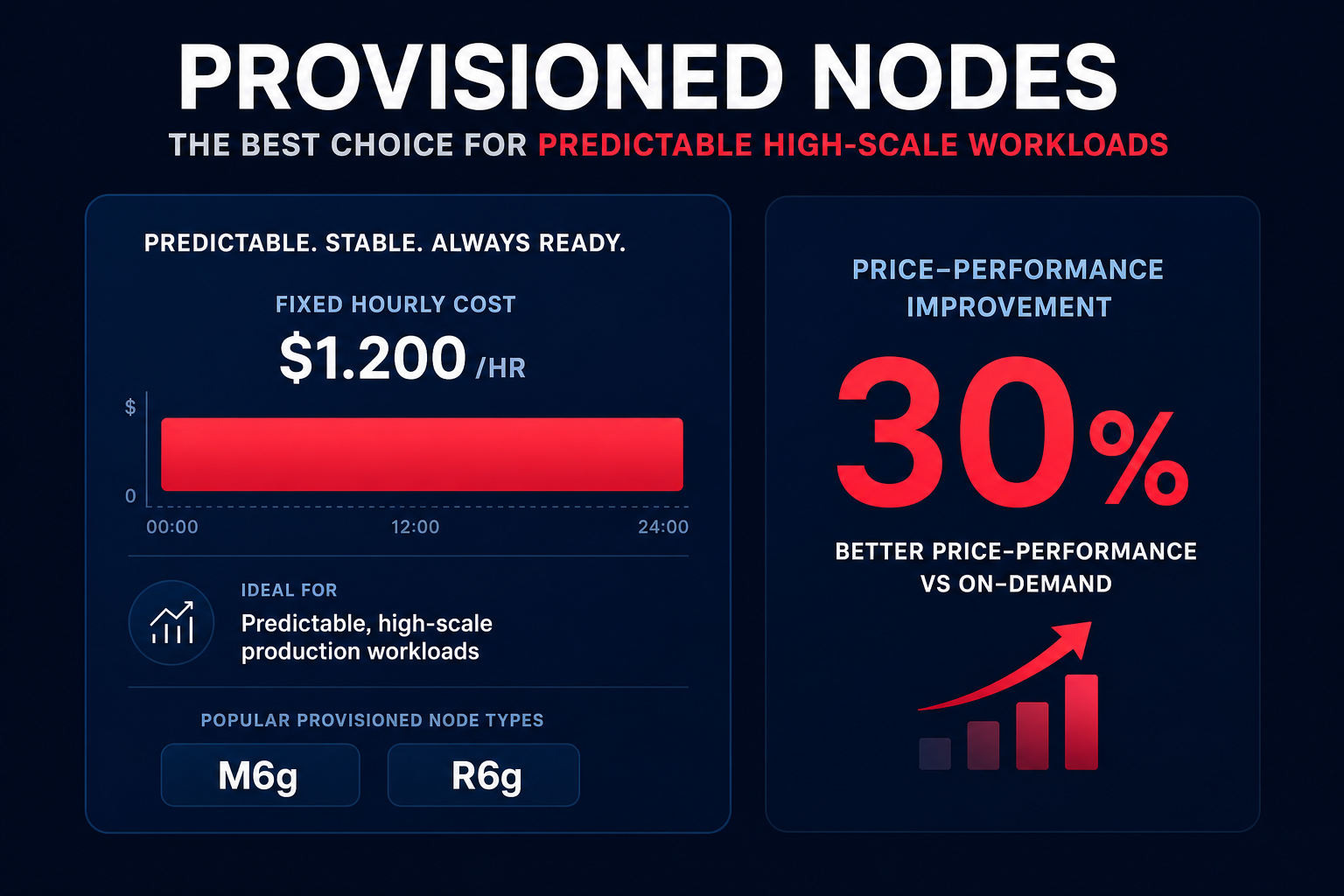

Provisioned nodes follow the traditional model where you pay a fixed hourly rate for a specific node type, such as `cache.m6g.xlarge` or `cache.r7g.2xlarge`. While this provides a predictable baseline, you absorb the cost of any over-provisioning: if your nodes sit at 20% utilization, you are still paying for 100% of the capacity. However, for steady-state workloads, provisioned nodes can be significantly cheaper, especially when you purchase reserved nodes. Committing to a one-year or three-year term can slash your costs by up to 60% compared to on-demand pricing.
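The arithmetic behind paying for idle capacity, and what a reservation discount does to it, fits in a few lines. This is a sketch only. The $0.50/hour node rate is a hypothetical placeholder, not a real quote. The 60% discount is the upper bound mentioned above, not a guaranteed rate.

```python
# Sketch: fixed provisioned cost, the share paid for idle capacity, and a
# reserved-node discount. The hourly rate used below is a placeholder.
HOURS_PER_MONTH = 730


def provisioned_monthly_cost(hourly_rate: float, node_count: int,
                             reserved_discount: float = 0.0) -> float:
    """Monthly cost of node_count nodes; reserved_discount is a fraction (0.6 = 60%)."""
    if not 0.0 <= reserved_discount < 1.0:
        raise ValueError("reserved_discount must be in [0, 1)")
    return hourly_rate * HOURS_PER_MONTH * node_count * (1 - reserved_discount)


def idle_spend(monthly_cost: float, utilization: float) -> float:
    """Portion of the bill that pays for capacity the workload does not use."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be in [0, 1]")
    return monthly_cost * (1 - utilization)


if __name__ == "__main__":
    on_demand = provisioned_monthly_cost(0.50, 2)  # hypothetical $0.50/hour node
    print(f"On-demand: ${on_demand:,.2f}, idle at 20%: ${idle_spend(on_demand, 0.20):,.2f}")
    print(f"With a 60% reserved discount: ${provisioned_monthly_cost(0.50, 2, 0.60):,.2f}")
```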

When to choose ElastiCache Serverless

Serverless is the optimal choice for applications with unpredictable traffic patterns or those in the early stages of development, where the workload is difficult to forecast. It removes the operational burden of capacity planning and scaling, because AWS automatically scales the cache up or down as traffic changes, without manual intervention.

From a cost-efficiency standpoint, serverless is particularly attractive for small workloads and microservices. Consider these advantages:

- Valkey serverless storage is metered at a minimum of 100 MB per cache, compared to a 1 GB minimum for Redis OSS.

- ElastiCache for Valkey on serverless is priced 33% lower than Redis OSS.

- High availability is included by default, with data stored redundantly across three Availability Zones and a 99.99% availability SLA.
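The minimum-storage difference matters most for tiny caches. The sketch below applies the minimums stated above, treating 100 MB as 0.1 GB for simplicity. The per-GB-hour rates in the example are assumed list prices and may not match your region or current pricing.

```python
# Sketch of minimum storage metering on ElastiCache Serverless, using the
# minimums stated in this article (100 MB for Valkey, 1 GB for Redis OSS).
MIN_BILLABLE_GB = {"valkey": 0.1, "redis-oss": 1.0}


def billable_storage_gb(engine: str, actual_gb: float) -> float:
    """Storage that is billed: the actual size, but never below the engine minimum."""
    return max(actual_gb, MIN_BILLABLE_GB[engine])


def monthly_storage_cost(engine: str, actual_gb: float,
                         usd_per_gb_hour: float, hours: float = 730) -> float:
    """Storage charge for one month at the given (assumed) GB-hour rate."""
    return billable_storage_gb(engine, actual_gb) * hours * usd_per_gb_hour


if __name__ == "__main__":
    tiny_cache_gb = 0.05  # a 50 MB microservice cache
    # ASSUMED storage rates; check the current ElastiCache pricing page.
    for engine, rate in (("valkey", 0.084), ("redis-oss", 0.125)):
        billed = billable_storage_gb(engine, tiny_cache_gb)
        cost = monthly_storage_cost(engine, tiny_cache_gb, rate)
        print(f"{engine}: billed for {billed} GB, about ${cost:,.2f}/month")
```

For a 50 MB cache, Redis OSS bills ten times as much storage as Valkey before the per-GB price difference is even applied.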

For a new service where you want the easiest way to get started, serverless removes the risk of paying for a large node that goes unused or of overwhelming a small node during a sudden traffic surge.

The financial case for provisioned nodes

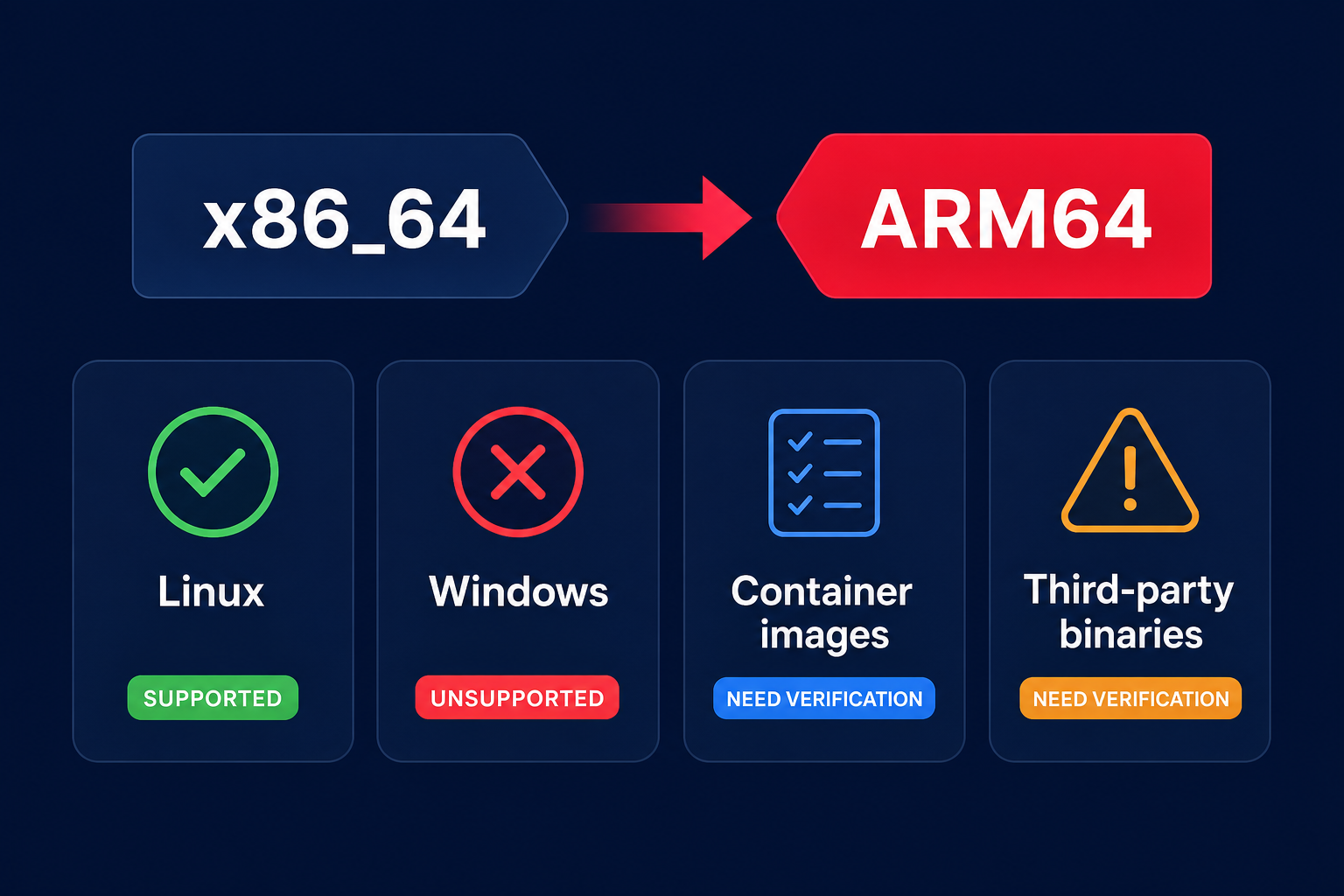

If your workload is predictable and runs at high scale, provisioned nodes often provide a better return on investment. Because you have fine-grained control over node type and count, you can match the cache to your workload's specific memory-to-CPU ratio. For instance, moving to Graviton-based nodes like the M6g or R6g families can deliver 40% to 60% better price-to-performance for memory-bound workloads.

Provisioned nodes are also the only way to use data tiering, which is available on R6gd nodes. Data tiering offloads less-frequently accessed data to local NVMe SSDs. This is particularly effective for datasets larger than several hundred gigabytes where only about 20% of the data is “hot.” Combine it with cloud resource rightsizing, keeping approximately 25% memory headroom, and you can achieve a much lower cost per gigabyte than the flat serverless storage rate. One caveat: provisioned clusters that stay on older Redis OSS versions incur an Extended Support premium once standard support ends, which can raise costs by up to 160% by year three. Upgrading to a supported Redis OSS or Valkey engine avoids that premium.
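A rough way to see why keeping only the hot set in memory helps is to count nodes under the 25% headroom rule. This is a simplified sketch. The 600 GB dataset and the 52 GB node size are hypothetical. The model ignores replicas, SSD capacity, and the higher per-node price of R6gd nodes, so treat the tiered figure as an illustration rather than a sizing recommendation.

```python
import math

# Rough rightsizing sketch: how many nodes keep data within (1 - headroom)
# of each node's memory. Node size is hypothetical; replicas, SSD capacity,
# and per-node pricing differences are deliberately ignored.


def nodes_needed(in_memory_gb: float, node_memory_gb: float,
                 headroom: float = 0.25) -> int:
    """Smallest node count that leaves `headroom` of memory free on each node."""
    if not 0.0 <= headroom < 1.0:
        raise ValueError("headroom must be in [0, 1)")
    usable_per_node = node_memory_gb * (1 - headroom)
    return math.ceil(in_memory_gb / usable_per_node)


if __name__ == "__main__":
    dataset_gb, hot_fraction, node_gb = 600, 0.20, 52  # hypothetical workload and node
    all_in_memory = nodes_needed(dataset_gb, node_gb)
    hot_set_only = nodes_needed(dataset_gb * hot_fraction, node_gb)
    print(f"All data in memory: {all_in_memory} nodes")
    print(f"Only the hot 20% in memory (data tiering): {hot_set_only} nodes")
```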

Managing the trade-offs at scale

The primary financial risk with serverless is the ECPU cost during massive traffic spikes. While Valkey ECPUs are cheaper at $0.0023 per million compared to $0.0034 for Redis OSS, a high-throughput application serving billions of requests per day can quickly outpace the cost of a dedicated cluster. Conversely, provisioned nodes carry a “management tax.” Your team must spend time monitoring metrics like `DatabaseMemoryUsagePercentage` and `CPUUtilization` to ensure the cluster remains stable during peak demand.
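To find where serverless stops being the cheaper option, you can solve for the daily request volume at which its ECPU charges consume whatever the provisioned cluster costs beyond serverless storage. This sketch uses the ECPU rates quoted above. The $1,000/month cluster and $150/month storage figures are hypothetical. `ecpus_per_request=1.0` again assumes small GET/SET calls.

```python
# Sketch: daily request volume at which serverless spend matches a provisioned
# cluster. The cluster and storage costs are hypothetical inputs.


def breakeven_requests_per_day(provisioned_monthly_usd: float,
                               serverless_storage_monthly_usd: float,
                               usd_per_million_ecpu: float,
                               ecpus_per_request: float = 1.0,
                               days: int = 30) -> float:
    """Requests/day above which serverless costs more than the provisioned cluster."""
    compute_budget = provisioned_monthly_usd - serverless_storage_monthly_usd
    if compute_budget <= 0:
        return 0.0  # serverless storage alone already costs more than the cluster
    affordable_ecpus = compute_budget / usd_per_million_ecpu * 1_000_000
    return affordable_ecpus / ecpus_per_request / days


if __name__ == "__main__":
    # Hypothetical: a $1,000/month cluster versus $150/month of serverless storage.
    for engine, rate in (("Valkey", 0.0023), ("Redis OSS", 0.0034)):
        per_day = breakeven_requests_per_day(1_000, 150, rate)
        print(f"{engine}: break-even at about {per_day / 1e9:.1f} billion requests/day")
```

Payloads larger than about 1 KB raise `ecpus_per_request`, which lowers the break-even proportionally. At 2 ECPUs per request, it halves.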

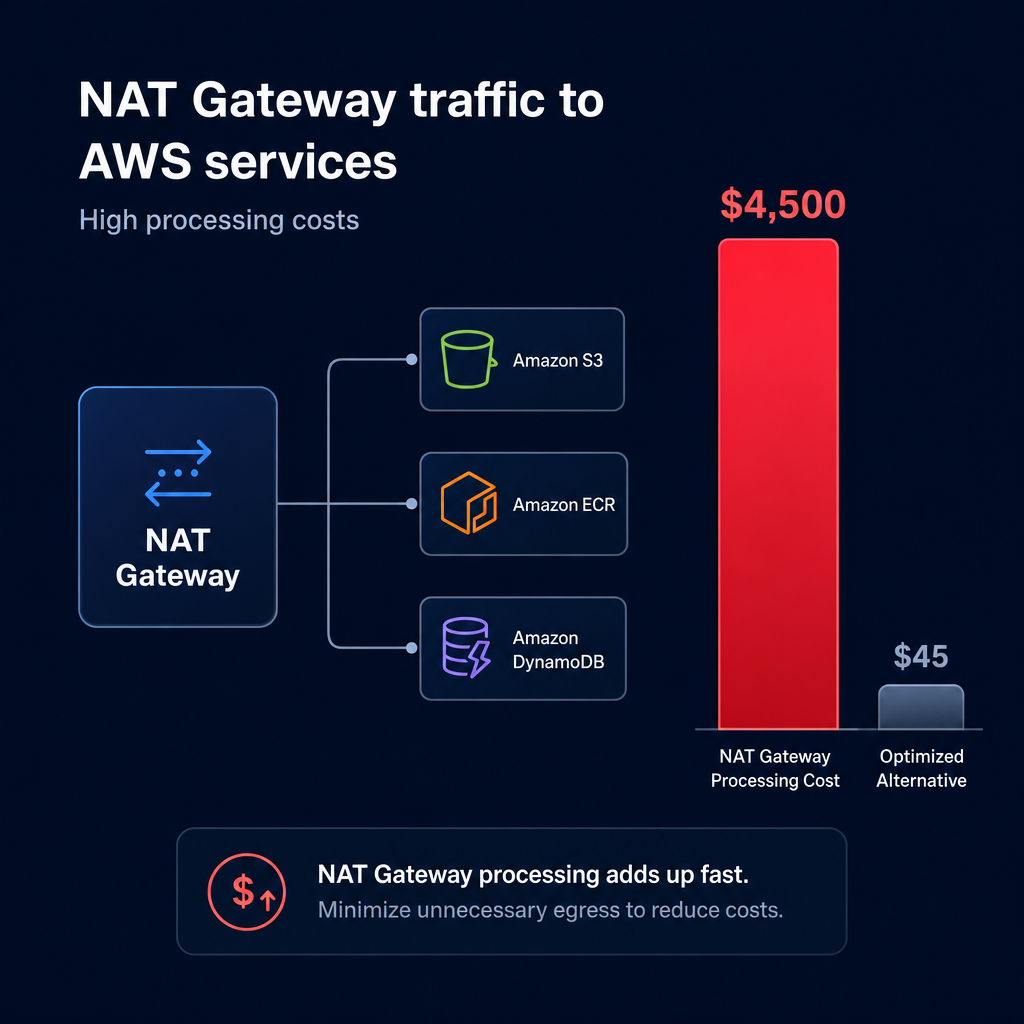

To avoid unexpected charges in either model, use AWS Cost Explorer and AWS Budgets together. Budgets can alert you when serverless ECPU charges exceed, or are forecast to exceed, your monthly budget. Cost Explorer helps you identify the point at which it becomes more cost-effective to move a growing serverless cache to a provisioned cluster and lock in lower rates.
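The idea behind a forecast-based alert can be illustrated locally. AWS Budgets supports forecasted-spend notifications natively. The straight-line projection below is only a hypothetical stand-in to show the logic, not how Budgets computes its forecasts. The function names and the $100/$250 figures are illustrative.

```python
import calendar
from datetime import date

# Simplified illustration of a forecast-based budget alert. AWS Budgets can
# alert on forecasted spend natively; this straight-line projection is only a
# stand-in for its forecasting model, not a description of it.


def projected_month_spend(month_to_date_usd: float, today: date) -> float:
    """Project month-end spend by extrapolating the average daily spend so far."""
    days_in_month = calendar.monthrange(today.year, today.month)[1]
    return month_to_date_usd / today.day * days_in_month


def should_alert(month_to_date_usd: float, today: date,
                 monthly_budget_usd: float, threshold: float = 1.0) -> bool:
    """True when the projection exceeds threshold x the budget (0.8 = 80%)."""
    return projected_month_spend(month_to_date_usd, today) > monthly_budget_usd * threshold


if __name__ == "__main__":
    # Hypothetical: $100 of ECPU charges in the first 10 days of April.
    today = date(2024, 4, 10)
    print(projected_month_spend(100, today))
    print(should_alert(100, today, monthly_budget_usd=250))
```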

Automating your ElastiCache savings

Navigating the nuances of ElastiCache pricing does not have to be a manual burden for your DevOps team. Whether you choose the flexibility of serverless or the raw performance of provisioned nodes, your underlying commitment strategy determines your actual effective savings rate. Hykell specializes in automated AWS rate optimization, using AI to blend Savings Plans and Reserved Instances to capture maximum discounts without compromising your infrastructure’s agility.

We provide real-time observability that allows you to drill down from a spend spike to a specific resource ID in seconds. By analyzing CPU, memory, and I/O usage, we help you reduce your AWS bill by up to 40% while ensuring your caching layer remains performant.

Ready to see how much you could save on your AWS bill? Use our cloud cost calculator to discover your potential for a 40% reduction in spend on autopilot. If we don’t find savings, you don’t pay.